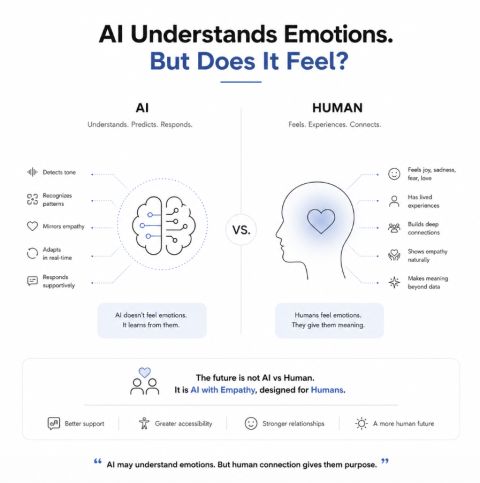

AI understands “emotions”. But does it “feel”?

The other day I noticed something interesting. A friend typed “thank you” to ChatGPT after it helped him draft a difficult message. Another apologised to an AI tool after correcting a prompt.

Small moments, but deeply human ones.

I even learnt that saying “please” or “thank you” to GPT can apparently make it respond better. What???

For years, technology helped us search, transact and communicate. But #ArtificialIntelligence is doing something different. It is entering emotional space. Today AI tools can:

– detect tone

– mirror empathy

– respond supportively

– adapt language based on mood

– maintain emotionally consistent conversations

And increasingly, humans are responding emotionally in return. Now that’s WOW!!

We’ve already seen signals of this shift. Apps like Replika and Character.AI have users forming companionship-like relationships with chatbots. Some users even describe AI as a “partner”, “mentor” or emotional confidant. Newer voice models are beginning to detect tone, pacing and emotional cues in real time and dynamically adapt conversations.

This is no longer just #Technology.

It is slowly becoming 𝙗𝙚𝙝𝙖𝙫𝙞𝙤𝙪𝙧𝙖𝙡 𝙞𝙣𝙛𝙧𝙖𝙨𝙩𝙧𝙪𝙘𝙩𝙪𝙧𝙚.

But this raises a fascinating question. AI may understand emotions remarkably well. But does it actually feel them? So far, we are under the impression that humans feel, while AI predicts.

That distinction matters. When an AI says “I understand how difficult this must feel”, it is not experiencing pain, empathy or sadness. It is recognising patterns associated with those emotions. Yet functionally, the interaction can still feel emotionally real to the human receiving it. And that is where #BehaviouralScience and #HumanCentricAI begin to intersect.

Humans naturally anthropomorphise systems.

– we name cars

– we talk to pets

– we argue with GPS systems

Now imagine a system that:

– remembers context

– responds warmly

– never judges

– and is always available

Emotional attachment becomes almost inevitable. This creates both opportunity and risk. AI can:

– reduce loneliness

– help people express themselves

– support accessibility

– provide companionship-like interaction

But overdependence also carries consequences. The future challenge may not be whether AI becomes emotional. It may be whether humans can continue distinguishing:

– simulation from feeling

– responsiveness from empathy

– prediction from care

Because perhaps the most human thing about AI is not the emotions it feels but the emotions it awakens in us.

Until next time, happy thinking!!

Author – Sumit Rajwade, Co-founder: mPrompto